5.0 The Sources and Collection of Data

In practice, most statistical data exist in official and private records that are compiled as part of the day-to-day routine of public organizations and private firms. Thus, government departments routinely record figures on such matters as births and deaths, the number of accidents per period, and the quantities of items exported or imported per period. Private firms also maintain continuous records on personnel, sales, expenses, production, and so on.

Primary data are those which are collected for the first time and are thus original in character, whereas secondary data are those which have already been collected by some other persons and which have passed through the statistical machine at least once. Primary data are in the shape of raw materials to which statistical methods are applied for the purposes of analysis and interpretation. Secondary data are usually in the shape of finished products, since they have been treated statistically in some form or other. Therefore, when data are used for purposes other than those for which they were originally compiled, they are known as ‘secondary’ data.

It may be observed that the distinction between primary and secondary data is a matter of degree or relativity only. The same set of data may be secondary in the hands of one user and primary in the hands of another. In general, data are primary to the agency that collects and processes them for the first time, and secondary to all other agencies that later use them.

For instance, the data relating to mortality (death rates) and fertility (birth rates) in Kenya published by the office of the Registrar of Births and Deaths are primary, whereas the same data reproduced by the United Nations Organization (UNO) in its United Nations Statistical Abstract become secondary as far as the latter agency (UNO) is concerned.

5.1 Choice between Primary and Secondary Data

There are substantial differences between the methods of collecting primary and secondary data. For primary data, which are to be collected afresh, the entire plan has to be formulated, starting with the definition of the various terms used, the units to be employed, the type of enquiry to be conducted, the extent of accuracy aimed at, and so on, whereas the collection of secondary data is a mere compilation of existing data. A proper choice between the types of data needed for any particular statistical investigation should be made after taking into consideration:

- the nature, objective and scope of the enquiry;

- the time and finances (budget) at the disposal of the researcher;

- the degree of precision aimed at.

When conducting a survey, primary data have the great advantage that exactly the information required is obtained. Terms can be carefully defined so that misunderstandings are avoided as far as possible. In fact, the investigation can be geared to cover all the ground considered necessary without having to rely on possibly out-of-date information, and the staff carrying out the investigation can be specifically picked for the purpose in mind. Many business statistics, however, are compiled from secondary data, which has certain advantages:

- The information will be obtained more speedily, and at less cost than primary data.

- In addition, no large army of investigators will be required.

Secondary data must, however, be used with great care, and the investigator must be satisfied that the data is sufficiently accurate for the statistical investigation. It is necessary for the researcher to know the source, the method of compilation and the purpose for which the original investigation was carried out.

Furthermore, information must be available about the units in which the data are expressed, including any change in those units since the data were first collected, and about the degree of accuracy of the results; otherwise the reliability of the data cannot be judged. Secondary data provide an important source of statistical information, particularly when circumstances make it impractical to obtain primary data. They must always, however, be applied in full knowledge of their limitations.

5.2 Modes of data collection

1. Personal Interview

A personal interview (i.e., face-to-face) is a two-way conversation initiated by an interviewer to obtain information from a respondent. The differences in the roles of interviewer and respondent are pronounced. They are typically strangers, and the interviewer generally controls the topics and patterns of discussion. The consequences of the event are usually insignificant for the respondent. The respondent is asked to provide information and has little hope of receiving any immediate or direct benefit from this cooperation. Yet if the interview is carried off successfully, it is an excellent data collection technique.

There are real advantages and clear limitations to personal interviewing. The greatest value lies in the depth of information and detail that can be secured, which far exceeds the information secured from telephone and self-administered studies, mail surveys, or computer-based surveys (both intranet and Internet). The interviewer can also do more things to improve the quality of the information received than with any other method.

Interviewers also have more control than with other kinds of interrogation. They can prescreen to ensure the correct respondent is replying, and they can set up and control interviewing conditions. They can use special scoring devices and visual materials. Interviewers also can adjust to the language of the interview because they can observe the problems and effects the interview is having on the respondent.

With such advantages, why would anyone want to use any other survey method? Probably the greatest reason is that the method is costly, in both money and time. Costs are particularly high if the study covers a wide geographic area or has stringent sampling requirements. An exception to this is the intercept interview, which targets respondents in centralized locations, such as shoppers in retail malls. Intercept interviews reduce the costs associated with the need for several interviewers, training and travel. Product and service demonstrations can also be coordinated, further reducing costs. Their cost-effectiveness, however, is offset when representative sampling is crucial to the study’s outcome.

Requirements for Success

Three broad conditions must be met to have a successful personal interview. They are:

(1) availability of the needed information from the respondent,

(2) an understanding by the respondent of his or her role, and

(3) adequate motivation by the respondent to cooperate.

The interviewer can do little about the respondent’s information level. Screening questions can qualify respondents when there is doubt about their ability to answer; this is the study designer’s responsibility.

Interviewers can influence respondents in many ways. An interviewer can explain what kind of answer is sought, how complete it should be, and in what terms it should be expressed. Interviewers even do some coaching in the interview, although this can be a biasing factor.

Interviewing technique

At first, it may seem easy to question another person about various topics, but research interviewing is not so simple. What we do or say as interviewers can make or break a study. Respondents often react more to their feelings about the interviewer than to the content of the questions. It is also important for the interviewer to ask the questions properly, record the responses accurately, and probe meaningfully. To achieve these aims, the interviewer must be trained to carry out those procedures that foster a good interviewing relationship.

Increasing Respondent’s Receptiveness

The first goal in an interview is to establish a friendly relationship with the respondent. Three factors will help with respondent receptiveness.

The respondents must

(1) believe the experience will be pleasant and satisfying,

(2) think answering the survey is an important and worthwhile use of their time, and

(3) have any mental reservations satisfied.

Whether the experience will be pleasant and satisfying depends heavily on the interviewer.

Typically, respondents will cooperate with an interviewer whose behavior reveals confidence and engages people on a personal level. Effective interviewers are differentiated not by demographic characteristics but by these interpersonal skills. By confidence, we mean that most respondents are immediately convinced they will want to participate in the study and cooperate fully with the interviewer. An engaging personal style is one where the interviewer instantly establishes credibility by adapting to the individual needs of the respondent.

For the respondent to think that answering the survey is important and worthwhile, some explanation of the study’s purpose is necessary although the amount will vary. It is the interviewer’s responsibility to discover what explanation is needed and to supply it. Usually, the interviewer should state the purpose of the study, tell how the information will be used, and suggest what is expected of the respondent. Respondents should feel that their cooperation would be meaningful to themselves and to the survey results. When this is achieved, more respondents will express their views willingly.

Respondents often have reservations about being interviewed that must be overcome. They may suspect the interviewer is a disguised salesperson, bill collector, or the like. They may also feel inadequate or fear the questioning will embarrass them. Techniques for successfully interviewing respondents in environments they control, particularly their homes, follow.

The Introduction

The respondent’s first reaction to the request for an interview is at best a guarded one. Interviewer appearance and action are critical in forming a good first impression. Interviewers should immediately identify themselves by name and organization, and provide any special identification. Introductory letters or other information confirm the study’s legitimacy. In this brief but critical period, the interviewer must display friendly intentions and stimulate the respondent’s interest.

The interviewer’s introductory explanations should be no more detailed than necessary. Too much information can introduce a bias. However, some respondents will demand more detail. For them, the interviewer might explain the objective of the study, its background, how the respondent was selected, the confidential nature of the interview (if it is), and the benefits of the research findings. Be prepared to deal with questions such as: “How did you happen to pick me?” “Who gave you my name?” “I don’t know enough about this.” “Why don’t you go next door?” “Why are you doing this study?”

The home interview typically involves two stages. The first occurs at the door, when the introductory remarks are made, but the doorstep is not a satisfactory location for many interviews. In trying to secure entrance, the interviewer will find it more effective to suggest the desired action rather than to ask permission. “May I come in?” can easily be countered with a respondent’s no. “I would like to come in and talk with you about X” is likely to be more successful.

If the Respondent Is Busy or Away

If it is obvious that the respondent is busy, it may be a good idea to give a general introduction and try to stimulate enough interest to arrange an interview at another time. If the designated respondent is not at home, the interviewer should briefly explain the proposed visit to the person who is contacted. It is desirable to establish good relations with intermediaries, since their attitudes can help in contacting the proper respondent.

Interviewers contacting respondents door to door often leave calling or business cards with their affiliation and a number where they can be reached to reschedule the interview.

Establishing a Good Relationship

The successful interview is based on rapport, meaning a relationship of confidence and understanding between interviewer and respondent. Interview situations are often new to respondents, and they need help in defining their roles. The interviewer can help by conveying that the interview is confidential (if it is) and important and that the respondent can discuss the topics with freedom from censure, coercion, or pressure. Under these conditions, the respondent can obtain much satisfaction in “opening up” without pressure being exerted.

Gathering the Data

To this point, the communication aspects of the interviewing process have been stressed. Having completed the introduction and established initial rapport, the interviewer turns to the technical task of gathering information. The interview centers on a prearranged questioning sequence. The technical task is well defined in studies with a structured questioning procedure (in contrast to an exploratory interview situation). The interviewer should follow the exact wording of the questions, ask them in the order presented, and ask every question that is specified. When questions are misunderstood or misinterpreted, they should be repeated.

A difficult task in interviewing is to make certain the answers adequately satisfy the question’s objectives. To do this, the interviewer must learn the objectives of each question from a study of the survey instructions or by asking the research project director. It is important to have this information well in mind because many first responses are inadequate even in the best-planned studies.

The technique of stimulating respondents to answer more fully and relevantly is termed probing. Since it presents a great potential for bias, a probe should be neutral and appear as a natural part of the conversation. Appropriate probes (those that when used will elicit the desired information while injecting a limited amount of bias) should be specified by the designer of the data collection instrument. There are several different probing styles:

- A brief assertion of understanding and interest. With comments such as “I see” or “uh-huh,” the interviewer can tell the respondent that the interviewer is listening and is interested in more.

- An expectant pause. The simplest way to encourage the respondent to say more is a pause along with an expectant look or a nod of the head. This approach must be used with caution. Some respondents have nothing more to say, and frequent pausing could create some embarrassing silences and make them uncomfortable, reducing their willingness to participate further.

- Repeating the question. This is particularly useful when the respondent appears not to understand the question or has strayed from the subject.

- Repeating the respondent’s reply. The interviewer can do this while writing it down. Such repetition often serves as a good probe. Hearing thoughts restated often promotes revisions or further comments.

- A neutral question or comment. Such comments make a direct bid for more information. Examples are: “How do you mean?” “Can you tell me more about your thinking on that?” “Why do you think that is so?” “Anything else?”

- Question clarification. When the answer is unclear or is inconsistent with something already said, the interviewer suggests that the respondent failed to understand fully. Typical of such probes is: “I’m not quite sure I know what you mean by that. Could you tell me a little more?” or “I’m sorry, but I’m not sure I understand. Did you say previously that…?” It is important that the interviewer take the blame for failure to understand so as not to appear to be cross-examining the respondent.

Recording the Interview

While the methods used in recording will vary, the interviewer usually writes down the answers of the respondent. Some guidelines can make this task more efficient. Record responses as they are made by the respondent. If you wait until later, you lose much of what is said. If there is a time constraint, the interviewer should use some shorthand system that will preserve the essence of the respondent’s replies without converting them into the interviewer’s paraphrases. Abbreviating words, leaving out articles and prepositions, and using only key words are good ways to do this.

Another technique is for the interviewer to repeat the response while writing it down. This helps to hold the respondent’s interest during the writing and checks the interviewer’s understanding of what the respondent said. Normally the interviewer should start writing when the respondent begins to reply. The interviewer should also record all probes and other comments on the questionnaire in parentheses to set them off from responses.

Study designers sometimes create a special interview instrument for recording respondent answers. This may be integrated with the interview questions or a separate document. In such instances the likely answers are anticipated, allowing the interviewer to check respondent answers or to record ranks or ratings. However, all interview instruments must permit the entry of unexpected responses.

Interview Problems

In personal interviewing, the researcher must deal with bias and cost. While each is discussed separately, they are interrelated. Biased results grow out of three types of error: sampling error, non-response error and response error.

1. Non-response Error. In personal interviews, non-response error occurs when you cannot locate the person whom you are supposed to study or when you are unsuccessful in encouraging the person to participate. This is an especially difficult problem when you are using a probability sample of subjects. If there are predesignated persons to be interviewed, the task is to find them; if you are forced to interview substitutes, an unknown but possibly substantial bias is introduced. One study of non-response found that only 31 percent of all first calls (and 20 percent of all first calls in major metropolitan areas) were completed. The most reliable solution to non-response problems is to make callbacks. If enough attempts are made, it is usually possible to contact most target respondents, although unlimited callbacks are expensive. An original contact plus three callbacks should usually secure about 85 percent of the target respondents. Yet in one study, 36 percent of central city residents still were not contacted after three callbacks.

2. Response Error. When the data reported differ from the actual data, response error occurs. There are many ways these errors can happen. Errors can be made in the processing and tabulating of data, and errors occur when the respondent fails to report fully and accurately.

Interviewer error is also a major source of response bias. The sample loses credibility and is likely to be biased if interviewers do not do a good job of enlisting respondent cooperation.

Perhaps the most insidious form of interviewer error is cheating. Surveying is difficult work, often done by part-time employees, usually with only limited training and under little direct supervision. At times, falsification of an answer to an overlooked question is perceived as an easy solution to counterbalance the incomplete data. This seemingly easy, seemingly harmless first step can be followed by more pervasive forgery. It is not known how much of this occurs, but it should be of constant concern to research directors as they develop their data collection design.

It is also obvious that an interviewer can distort the results of any survey by inappropriate suggestions, word emphasis, tone of voice, and question rephrasing. These activities, whether premeditated or merely due to carelessness, are widespread. Interviewers can influence respondents in many other ways also. Older interviewers are often seen as authority figures by young respondents, who modify their responses accordingly.

Some research indicates that perceived social distance between interviewer and respondent has a distorting effect, although the studies do not fully agree on just what this relationship is. In light of the numerous studies on the various aspects of interview bias, the safest course for researchers is to recognize that there is a constant potential for response error.

Costs

While professional interviewers’ wage scales are typically not high, interviewing is costly, and these costs continue to rise. Much of the cost results from the substantial interviewer time taken up with administrative and travel tasks. Respondents are often geographically scattered, and this adds to the cost. Repeated contacts (recommended at six to nine per household) are expensive.

In recent years, some professional research organizations have attempted to gain control of these spiraling costs. Interviewers have typically been paid an hourly rate, but this method rewards inefficient interviewers and often results in field costs exceeding budgets. A second approach to the reduction of field costs has been to use the telephone to schedule personal interviews. A third means of reducing high field costs is to use self-administered questionnaires.
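The trade-off between callbacks and cost described in this section can be illustrated with a deliberately simple model. The sketch below assumes, purely for illustration, that every attempt reaches the designated respondent independently and with the same probability p; real contact rates differ between first calls and callbacks and between areas, so the model does not reproduce the figures quoted earlier. The function names are hypothetical.

```python
def cumulative_contact_rate(p: float, attempts: int) -> float:
    """Probability of reaching a respondent at least once in `attempts` tries.

    Assumes (hypothetically) that attempts are independent and each succeeds
    with the same probability p.
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a probability between 0 and 1")
    if attempts < 0:
        raise ValueError("attempts must be non-negative")
    # The respondent is missed only if every attempt fails: (1 - p) ** attempts.
    return 1.0 - (1.0 - p) ** attempts


def attempts_needed(p: float, target: float) -> int:
    """Smallest number of attempts whose cumulative contact rate reaches `target`."""
    if not 0.0 < p <= 1.0 or not 0.0 <= target < 1.0:
        raise ValueError("need 0 < p <= 1 and 0 <= target < 1")
    n = 0
    while cumulative_contact_rate(p, n) < target:
        n += 1
    return n


if __name__ == "__main__":
    # Illustrative per-attempt contact rate of 0.31 (the first-call figure quoted
    # earlier), for an original call followed by up to three callbacks.
    for k in range(1, 5):
        print(k, round(cumulative_contact_rate(0.31, k), 3))
```

Under these assumptions, an original call plus three callbacks reaches about 77 percent of the sample (cumulative_contact_rate(0.31, 4) is roughly 0.773), and attempts_needed(0.31, 0.85) returns 6. Each additional attempt adds interviewer and travel time, which is why the number of callbacks has to be weighed against the field budget.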

2. Telephone Interview

The telephone can be helpful in arranging personal interviews and screening large populations for unusual types of respondents. Studies have also shown that making prior notification calls can improve the response rates of mail surveys. However, the telephone interview makes its greatest contribution in survey work as a unique mode of communication to collect information from respondents.

When compared to either personal interviews or mail surveys, the use of telephones brings a faster completion of a study, sometimes taking only a day or so for the fieldwork. When compared to personal interviewing, it is also likely that interviewer bias, especially bias caused by the physical appearance, body language, and actions of the interviewer, is reduced by using phones.

Finally, behavioral norms work to the advantage of telephone interviewing: if someone is present, a ringing phone is usually answered, and it is the caller who decides the purpose, length, and termination of the call. There are also disadvantages to using the telephone for research. Obviously, the respondent must be available by phone. Telephone usage rates are lower among single adults, the less educated, poorer minorities, and people employed as non-professional, non-managerial workers. These variations can be a source of bias.

A limit on interview length is another disadvantage of the telephone, but the degree of this limitation depends on the respondent’s interest in the topic. Ten minutes has generally been thought of as ideal, but interviews of 20 minutes or more are not uncommon.

In telephone interviewing, it is not possible to use maps, illustrations, other visual aids, complex scales, or measurement techniques. The medium also limits the complexity of the questioning and the use of sorting techniques. One ingenious solution to the difficulty of using scales, however, has been to employ a nine-point scaling approach and to ask the respondent to visualize it by using the telephone dial or keypad.
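The keypad approach amounts to a simple coding rule for the interviewer. The sketch below assumes, for illustration only, that keys 1 to 9 stand for the nine scale points (1 lowest, 5 the midpoint, 9 highest) and that any other key is rejected; the function names are hypothetical.

```python
def keypad_to_scale(key: str) -> int:
    """Convert a keypad press into a point on a nine-point scale.

    Assumed coding rule: keys '1' to '9' map directly to points 1 to 9;
    '0', '*', '#' or anything else is not a valid answer.
    """
    if len(key) == 1 and key in "123456789":
        return int(key)
    raise ValueError(f"key {key!r} is not a point on the nine-point scale")


def position_label(point: int) -> str:
    """Describe a scale point relative to the assumed midpoint of 5."""
    if not 1 <= point <= 9:
        raise ValueError("point must be between 1 and 9")
    if point < 5:
        return "below midpoint"
    if point == 5:
        return "midpoint"
    return "above midpoint"


if __name__ == "__main__":
    for pressed in ["7", "5", "2"]:
        point = keypad_to_scale(pressed)
        print(pressed, point, position_label(point))
```

On a standard keypad the keys 1 to 9 form a three-by-three grid with 5 in the centre, which makes the midpoint easy to describe to a respondent over the phone.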

Some studies suggest the response rate in telephone studies is lower than for comparable face-to-face interviews. One reason is that respondents find it easier to terminate a phone interview. Telephone surveys can result in less thorough responses, and those interviewed by phone find the experience less rewarding than a personal interview. Respondents report less rapport with telephone interviewers than with personal interviewers. Given the growing costs and difficulties of personal interviews, it is likely that an even higher share of surveys will be by telephone in the future. Thus, it behooves management researchers using telephone surveys to attempt to improve the enjoyment of the interview. One authority suggests:

We need to experiment with techniques to improve the enjoyment of the interview by the respondent, maximize the overall completion rate, and minimize response error on specific measures. This work might fruitfully begin with efforts at translating into verbal form the visual cues of frowns, raised eyebrows, eye contact, etc. All of these cues have informational content and are important parts of the personal interview setting. We can perhaps purposefully choose those cues that are most important to data quality and respondent trust and discard the many that are extraneous to the survey interaction.

3. Self-Administered Surveys

The self-administered questionnaire has become ubiquitous in modern living. Service evaluations of hotels, restaurants, car dealerships, and transportation providers furnish ready examples. Often a short questionnaire is left to be completed by the respondent in a convenient location or is packaged with a product.

Mail Surveys

Mail surveys typically cost less than personal interviews. Telephone and mail costs are in the same general range, although in specific cases either may be lower. The more geographically dispersed the sample, the more likely it is that mail will be the low-cost method. Another advantage of mail is that we can contact respondents who might otherwise be inaccessible. People such as major corporate executives are difficult to reach in any other way. When the researcher has no specific person to contact, say, in a study of corporations, the mail survey will often be routed to the appropriate respondent.

In a mail survey, the respondent can take more time to collect facts, talk with others, or consider replies at length than is possible with the telephone, personal interview, or intercept studies. Mail surveys are typically perceived as more impersonal, providing more anonymity than the other communication modes, including other methods for distributing self-administered questionnaires.

- The major weakness of the mail survey is non-response error. Many studies have shown that better-educated respondents and those more interested in the topic are the most likely to answer mail surveys. A high percentage of those who reply to a given survey have usually replied to others, while large shares of those who do not respond are habitual non-respondents.

- Mail surveys with a return of about 30% are often considered satisfactory, but there are instances of more than 70% response. In either case, there are many non-responders, and we usually know nothing about how those who answer differ from those who do not answer.

- The second major limitation of mail surveys concerns the type and amount of information that can be secured. We normally do not expect to obtain large amounts of information and cannot probe deeply into questions. Respondents will generally refuse to cooperate with a long or complex mail questionnaire unless they perceive a personal benefit. Returned mail questionnaires with many questions left unanswered testify to this problem, but there are also many exceptions. One general rule of thumb is that the respondent should be able to complete the questionnaire in no more than 10 minutes.
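Why the unknown non-respondents matter can be shown with a standard identity. If a fraction r of the sample responds, the mean of the whole sample equals r times the respondents' mean plus (1 - r) times the non-respondents' mean, so the respondents' mean differs from it by (1 - r) multiplied by the gap between the two groups. Because the non-respondents' answers are normally unknown, the scores used below are hypothetical, and the function names are illustrative only.

```python
def response_rate(returned: int, sent: int) -> float:
    """Share of questionnaires sent that were returned."""
    if sent <= 0 or not 0 <= returned <= sent:
        raise ValueError("need sent > 0 and 0 <= returned <= sent")
    return returned / sent


def nonresponse_bias(rate: float, mean_respondents: float, mean_nonrespondents: float) -> float:
    """Amount by which the respondents' mean differs from the full-sample mean.

    Full-sample mean = rate * mean_respondents + (1 - rate) * mean_nonrespondents,
    so mean_respondents - full-sample mean
       = (1 - rate) * (mean_respondents - mean_nonrespondents).
    """
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    return (1.0 - rate) * (mean_respondents - mean_nonrespondents)


if __name__ == "__main__":
    # Hypothetical mail survey: 300 of 1,000 questionnaires returned.
    r = response_rate(300, 1000)
    # Hypothetical mean satisfaction scores for the two groups.
    bias = nonresponse_bias(r, mean_respondents=7.0, mean_nonrespondents=5.0)
    print(f"response rate = {r:.0%}, bias of respondents' mean = {bias:.2f}")
```

With a 30 percent return and a two-point gap between the groups, the respondents' mean overstates the sample mean by about 1.4 points. If the two groups happened to answer alike, the bias would be zero whatever the response rate, which is why the unknown difference between them, rather than the rate alone, is the real concern.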

Maximizing the Mail Survey

To maximize the overall probability of response, attention must be given to each point of the survey process where the response may break down, for example:

- The wrong address and wrong postage can result in non-delivery or non-return.

- The letter may look like junk mail and be discarded without being opened.

- Lack of proper instructions for completion leads to non-response.

- The wrong person opens the letter and fails to call it to the attention of the right person.

- A respondent finds no convincing explanation for completing the survey and discards it.

- A respondent temporarily sets the questionnaire aside and fails to complete it.

- The return address is lost so the questionnaire cannot be returned.
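Because a questionnaire must get past every one of these points to come back completed, the overall chance of a return is the product of the chances of passing each point, each taken given that the earlier points were passed. The stage names and probabilities in the sketch below are hypothetical; it only shows why weakness at any single point pulls the whole response rate down.

```python
from math import prod
from typing import Dict


def overall_response_probability(stages: Dict[str, float]) -> float:
    """Multiply the probability of passing each stage, given that earlier stages were passed."""
    for name, p in stages.items():
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"stage {name!r} has an invalid probability {p}")
    return prod(stages.values())


def weakest_stage(stages: Dict[str, float]) -> str:
    """Name of the stage with the lowest pass-through probability."""
    return min(stages, key=stages.__getitem__)


if __name__ == "__main__":
    # Hypothetical pass-through probabilities for one mail survey.
    stages = {
        "delivered to correct address": 0.95,
        "opened rather than discarded as junk": 0.80,
        "reaches the right person": 0.90,
        "respondent convinced to take part": 0.60,
        "completed rather than set aside": 0.85,
        "returned successfully": 0.98,
    }
    print(round(overall_response_probability(stages), 3))  # 0.342
    print(weakest_stage(stages))
```

Even though no single hypothetical stage loses more than 40 percent of the sample, only about a third of the questionnaires come back, and the weakest stage (here, convincing the respondent) is the natural first target for the cover letter and incentives.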

Efforts to overcome these problems will vary according to the circumstances, but some general suggestions can be made for mail surveys and, by extension, for self-administered questionnaires using different delivery modes. A questionnaire, cover letter, and return mechanism are sent. Incentives, such as dollar bills, coins or gift coupons, are often attached to the letter in commercial studies. Follow-ups are usually needed to get the maximum response. Opinions differ about the number and timing of follow-ups.

5.3 Research Instruments

1. The Questionnaire

A questionnaire can be defined as a group of printed questions which have been deliberately designed and structured to be used to gather information from respondents.

Advantages of using Questionnaire

1. They can be used to reach many people.

2. They save time, especially where they have been mailed to respondents.

3. They are cost-effective, since they can be mailed and the use of interviewers can be avoided.

4. Questions are standardized, so every respondent answers the same questions and the responses are comparable.

5. Interviewer biases can be avoided when questionnaires are mailed.

6. They give a greater feeling of anonymity and therefore encourage open responses to sensitive questions.

7. They are effective in reaching distant locations that it would be impractical to visit.

Disadvantages

1. Questionnaires mailed to respondents may not be returned.

2. The researcher cannot control the context in which questions are answered, in particular the presence of other people who may fill in the questionnaire.

3. A certain number of potential respondents, particularly the least educated, may be unable to respond to written questionnaires because of illiteracy or other difficulties in reading.

4. Written questionnaires do not allow the researcher to correct misunderstandings or answer questions that the respondent may have.

5. Some questionnaires may be returned half-filled or unanswered.

Guidelines for Asking Questions

In the actual practice of social research, variables are usually operationalized by asking people questions as a way of getting data for analysis and interpretation. That is always the case in survey research, and such ‘self-report’ data are often collected in experiments, field research, and other modes of observation. Sometimes the questions are asked by an interviewer; sometimes they are written down and given to respondents for completion (these are called self-administered questionnaires).

The term questionnaire suggests a collection of questions, but an examination of a typical questionnaire will probably reveal as many statements as questions. That is not without reason. Often, the researcher is interested in determining the extent to which respondents hold a particular attitude or perspective. If you are able to summarize the attitude in a fairly brief statement, you will often present that statement and ask respondents whether they agree or disagree with it. The Likert scale, named after Rensis Likert, is a format in which respondents are asked whether they strongly agree, agree, disagree, or strongly disagree, or perhaps strongly approve, approve, and so forth.
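Answers in this format are usually converted to numbers before analysis. The sketch below assumes the four-point agree/disagree format just described, coded 1 (strongly disagree) to 4 (strongly agree); the optional reverse-scoring for statements worded in the opposite direction is a common practice added here as an assumption, not something the text prescribes. The names are hypothetical.

```python
from typing import Iterable

# Assumed numeric codes for the four-point agree/disagree format.
LIKERT_CODES = {
    "strongly disagree": 1,
    "disagree": 2,
    "agree": 3,
    "strongly agree": 4,
}


def code_response(answer: str, reverse: bool = False) -> int:
    """Turn one verbal answer into its numeric code.

    With reverse=True the scale is flipped (1 <-> 4, 2 <-> 3), as is often done
    for statements worded in the opposite direction.
    """
    key = answer.strip().lower()
    if key not in LIKERT_CODES:
        raise ValueError(f"unrecognised answer: {answer!r}")
    code = LIKERT_CODES[key]
    return len(LIKERT_CODES) + 1 - code if reverse else code


def mean_score(answers: Iterable[str], reverse: bool = False) -> float:
    """Average coded score for a set of answers to one statement."""
    codes = [code_response(a, reverse) for a in answers]
    if not codes:
        raise ValueError("no answers to score")
    return sum(codes) / len(codes)


if __name__ == "__main__":
    answers = ["Agree", "strongly agree", "Disagree", "agree"]
    print(mean_score(answers))                # 3.0
    print(mean_score(answers, reverse=True))  # 2.0
```

Normalizing case and whitespace before lookup means small recording differences ("Agree" versus "agree") do not create spurious categories, while a genuinely unexpected answer raises an error instead of being silently miscoded.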

Open-Ended and Closed-Ended Questions

Open-ended questions

The respondent is asked to provide his or her own answer to the question, e.g., ‘What do you feel is the most important issue facing Kenya today?’, and is provided with a space to write in the answer (or is asked to report it verbally to an interviewer).

Closed-ended Questions

The respondents are asked to select an answer from among a list provided by the researcher. Closed-ended questions are very popular because they provide greater uniformity of responses and are more easily processed. Open-ended responses must be coded before they can be processed for computer analysis. This coding process often requires that the researcher interpret the meaning of responses, opening the possibility of misunderstanding and researcher bias. There is also a danger that some respondents will give answers that are essentially irrelevant to the researcher’s intent. Closed-ended questions, by contrast, can often be transferred directly into computer format. The chief shortcoming of closed-ended questions lies in the researcher’s structuring of responses. In asking about ‘the most important issue facing Kenya’, for example, your checklist of issues might omit certain issues that respondents would have said were important.

In the construction of closed-ended questions, two requirements apply. First, the response categories provided should be exhaustive: they should include all the possible responses that might be expected. A common device is a final category such as ‘Other (please specify …………)’. Second, the answer categories must be mutually exclusive: the respondent should not feel compelled to select more than one.

(In some cases you may wish to solicit multiple answers, but these may create difficulties in data processing and analysis later on.)
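For numeric categories, both requirements can be checked mechanically. The sketch below uses a hypothetical age question; the brackets and the helper names `classify` and `check_categories` are invented for illustration. A value that fits no bracket shows the list is not exhaustive, and a value that fits two brackets shows the categories are not mutually exclusive.

```python
# Hypothetical age brackets for a closed-ended question.
# Each bracket is (low, high), both inclusive; high=None means "and over".
FAULTY = {"18-25": (18, 25), "25-35": (25, 35), "40-64": (40, 64)}
FIXED = {"18-24": (18, 24), "25-34": (25, 34), "35-64": (35, 64), "65 and over": (65, None)}

def classify(value, brackets):
    """Return the labels of every bracket that contains value."""
    return [label for label, (low, high) in brackets.items()
            if value >= low and (high is None or value <= high)]

def check_categories(brackets, possible_values):
    """Return (gaps, overlaps): values matching no bracket, and values matching several."""
    gaps, overlaps = [], []
    for value in possible_values:
        matches = classify(value, brackets)
        if not matches:
            gaps.append(value)        # list is not exhaustive
        elif len(matches) > 1:
            overlaps.append(value)    # categories are not mutually exclusive
    return gaps, overlaps

gaps, overlaps = check_categories(FAULTY, range(18, 100))
print("overlaps:", overlaps)                    # overlaps: [25]
print("first gaps:", gaps[:4])                  # first gaps: [36, 37, 38, 39]
print(check_categories(FIXED, range(18, 100)))  # ([], [])
```

In the corrected version the bracket boundaries no longer touch, and an open top category (‘65 and over’) makes the list exhaustive.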

Make Items Clear

Questionnaire items should be clear and unambiguous. Often you can become so deeply involved in the topic under examination that opinions and perspectives are clear to you but will not be clear to your respondents, many of whom have given little or no attention to the topic. Or if you have only a superficial understanding of the topic, you may fail to specify the intent of your question sufficiently. The question ‘What do you think about the proposed nuclear freeze?’ may evoke in the respondent a counter-question: ‘Which nuclear freeze proposal?’ Questionnaire items should be precise so that the respondent knows exactly what the researcher wants an answer to.

Avoid Double-Barreled Questions

Frequently, researchers ask respondents for a single answer to a combination of questions. That seems to happen most often when the researcher has personally identified with a complex question. For example, you might ask respondents to agree or disagree with the statement ‘The United States should abandon its space program and spend the money on domestic programs.’ Although many people would unequivocally agree with the statement and others would unequivocally disagree, still others would be unable to answer. Some would want to abandon the space program and give the money back to the taxpayers. Others would want to continue the program but also put more money into domestic programs. These latter respondents could neither agree nor disagree without misleading you.

Respondents must be Competent to Answer

In asking respondents to provide information, you should continually ask yourself whether they are able to do so reliably. In a study of child rearing, you might ask respondents to report the age at which they first talked back to their parents. Quite aside from the problem of defining ‘talking back’, it is doubtful whether most respondents would remember with any degree of accuracy.

One group of researchers examining the driving experience of teenagers insisted on asking an open-ended question concerning the number of miles driven since receiving a license. Although consultants argued that few drivers would be able to estimate such information with any accuracy, the question was asked nonetheless. In response, some teenagers reported driving hundreds of thousands of miles.

Questions should be Relevant

When attitudes are requested on a topic that few respondents have thought about or really cared about, the results are not likely to be very useful. This point is occasionally illustrated when you ask for responses relating to fictitious persons and issues. In a political poll, respondents were asked whether they were familiar with each of 15 political figures in the community. As a methodological exercise, a name was made up: John Maina. In response, 9% of the respondents said they were familiar with him. Of those respondents familiar with him, about half reported seeing him on television and reading about him in the newspapers.

When you obtain responses to fictitious issues, you can disregard those responses. But when the issue is real, you may have no way of telling which responses genuinely reflect attitudes and which reflect meaningless answers to irrelevant questions.
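The planted-name check is easy to apply once the poll answers are tabulated. The sketch below assumes each respondent's answers are stored as the set of names he or she claims to know; the respondent IDs, the other names, and the helper `flag_bogus_familiarity` are hypothetical, and only the fictitious name John Maina comes from the text.

```python
# Hypothetical poll data: respondent ID -> names the respondent claims to know.
FICTITIOUS = "John Maina"  # the made-up name planted in the list

poll = {
    "R1": {"Jane Wanjiku", "Peter Otieno"},
    "R2": {"John Maina", "Jane Wanjiku"},
    "R3": {"Peter Otieno"},
    "R4": set(),
}

def flag_bogus_familiarity(responses, fictitious=FICTITIOUS):
    """Return the IDs of respondents who claim to know the fictitious name,
    and their share of all respondents."""
    flagged = sorted(rid for rid, known in responses.items() if fictitious in known)
    share = len(flagged) / len(responses) if responses else 0.0
    return flagged, share

flagged, share = flag_bogus_familiarity(poll)
print(flagged, f"{share:.0%}")  # ['R2'] 25%
```

The share plays the role of the 9% in the example: answers about the fictitious name can simply be disregarded, while a high share warns that some answers about the real names may be equally meaningless.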

Short Items are best

In the interest of being unambiguous and precise and pointing to the relevance of an issue, the researcher is often led into long and complicated items. That should be avoided. Respondents are often unwilling to study an item in order to understand it. The respondent should be able to read an item quickly, understand its intent, and select or provide an answer without difficulty. Provide clear, short items that will not be misinterpreted under those conditions.

Avoid Negative Items

The appearance of a negative item in a questionnaire paves the way for easy misinterpretation. Asked to agree or disagree with the statement ‘The United States should not recognize Cuba’, a sizable portion of the respondents will read over the word not and answer on that basis. Thus, some will agree with the statement when they are in favor of recognition, and others will agree when they oppose it. And you may never know which is which.

Avoid Biased Items and Terms

The meaning of someone’s response to a question depends in large part on the wording of the question that was asked. That is true of every question and answer. Some questions seem to encourage particular responses more than other questions do. Questions that encourage respondents to answer in a particular way are called biased.

Most researchers recognize the likely effect of a question that begins ‘Don’t you agree with the President that …’, and no reputable researcher would use such an item. Unhappily, the biasing effect of items and terms is far subtler than this example suggests. The mere identification of an attitude or position with a prestigious person or agency can bias responses. The item ‘Do you agree or disagree with the recent Supreme Court decision that …’ would have a similar effect. This does not mean that such wording will necessarily produce consensus or even a majority in support of the position identified with the prestigious person or agency, only that support would likely be increased over what would have been obtained without such identification.

Questionnaire items can be biased negatively as well as positively. ‘Do you agree or disagree with the position of Adolf Hitler when he stated that …’ is an example. Since 1949, asking Americans questions about China has been tricky. Identifying the country as ‘China’ can still result in confusion between mainland China and Taiwan. Not all Americans recognize the official name: the People’s Republic of China. Referring to ‘Red China’ or ‘Communist China’ evokes negative responses from many respondents, though that might be desirable if your purpose were to study anti-communist feelings.

Questionnaire Construction

Questionnaires are essential to and most directly associated with survey research. They are also widely used in experiments, field research, and other data-collection activities. Thus questionnaires are used in connection with many modes of observation in social research.

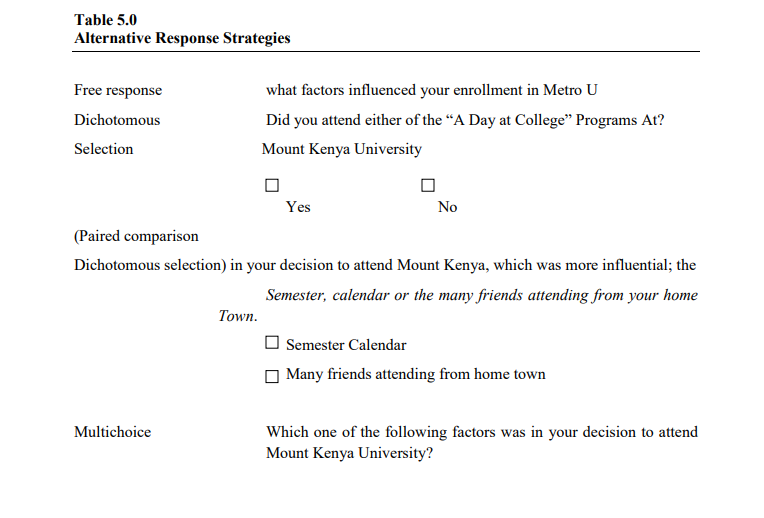

Response Strategies Illustrated

The characteristics of respondents, the nature of the topic(s) being studied, the type of data needed, and your analysis plan dictate the response strategy. Examples of the strategies described below are found in Table 10.1.

Free-Response Questions: Also known as open-ended questions, these ask the respondent a question; either the interviewer pauses for the answer (which is unaided), or the respondent records his or her ideas in his or her own words in the space provided on a questionnaire.

Dichotomous Response Questions: A topic may present clearly dichotomous choices: something is a fact or it is not; a respondent can either recall or not recall information; a respondent attended or didn’t attend an event.

Multiple-Choice Questions: These are appropriate where there are more than two alternatives or where we seek gradations of preference, interest, or agreement; the latter situation also calls for rating questions. While such questions offer more than one alternative answer, they request the respondent to make a single choice. Multiple-choice questions can be efficient, but they also present unique design problems.

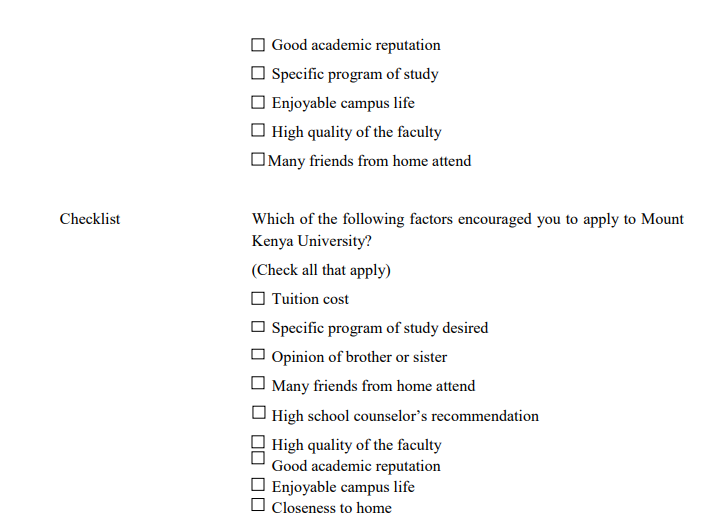

Checklist Strategies

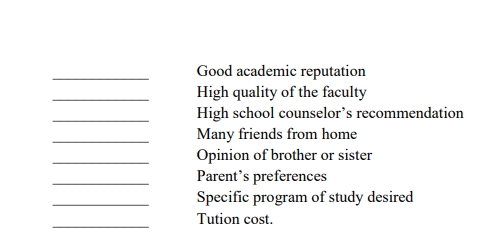

When you want a respondent to give multiple responses to a single question, you will ask the question in one of three ways. If relative order is not important, the checklist is the logical choice. Questions like ‘Which of the following factors encouraged you to apply to Mount Kenya University? (Check all that apply.)’ force the respondent to exercise a dichotomous response (yes, encouraged; no, didn’t encourage) to each factor presented. Of course, you could have asked for the same information as a series of dichotomous selection questions, one for each individual factor, but that would have been time-consuming. Checklists are more efficient. Checklists generate nominal data.
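Because each factor in a checklist receives an implicit yes or no, a completed checklist can be stored as one 0/1 indicator per factor and summarised as frequencies, the usual summary for nominal data. The factor names and the helpers `checklist_to_indicators` and `tally` below are hypothetical.

```python
# Hypothetical checklist for "Which of the following factors encouraged you to apply?"
FACTORS = ["Location", "Tuition fees", "Course offered", "Friends attending"]

def checklist_to_indicators(checked, factors=FACTORS):
    """Turn one respondent's ticks into a yes (1) / no (0) value for every factor."""
    unknown = set(checked) - set(factors)
    if unknown:
        raise ValueError(f"not on the checklist: {sorted(unknown)}")
    return {factor: int(factor in checked) for factor in factors}

def tally(responses, factors=FACTORS):
    """Count how many respondents ticked each factor (frequencies of nominal data)."""
    counts = {factor: 0 for factor in factors}
    for checked in responses:
        for factor, flag in checklist_to_indicators(checked, factors).items():
            counts[factor] += flag
    return counts

responses = [{"Location", "Course offered"}, {"Location"}, set()]
print(tally(responses))
# {'Location': 2, 'Tuition fees': 0, 'Course offered': 1, 'Friends attending': 0}
```

Each indicator is exactly the answer a separate dichotomous question would have produced, which is why the checklist loses no information while saving the respondent's time.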

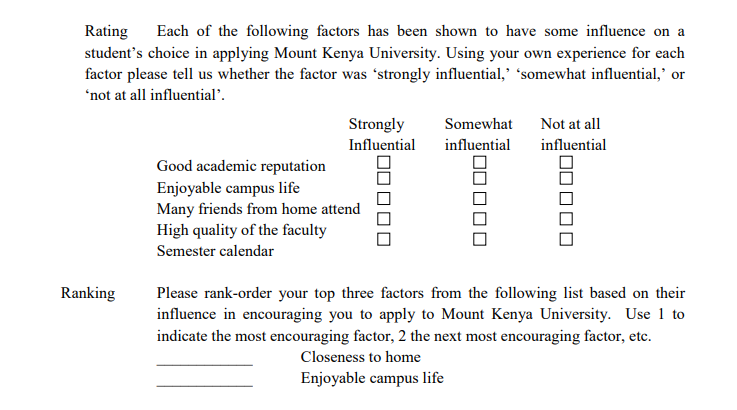

Rating Strategies

Rating questions ask the respondent to position each factor on a companion scale, which may be verbal, numeric, or graphic: ‘Each of the following factors has been shown to have some influence on a student’s choice to apply to Mount Kenya University. Using your own experience, for each factor please tell us whether the factor was “strongly influential”, “somewhat influential”, or “not at all influential”.’ Generally, rating-scale structures generate ordinal data; some carefully crafted scales generate interval data.
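With ordinal data the order of the categories is meaningful but the distances between them are not, so frequencies and the median are safer summaries than the arithmetic mean. The sketch below assumes the three-point scale from the example question; `summarise_ratings` is a hypothetical helper. It uses `statistics.median_low` as one convention that always returns an observed category rather than a point halfway between two labels.

```python
from collections import Counter
from statistics import median_low

# Three-point verbal scale from the example, ordered from lowest to highest.
SCALE = ["not at all influential", "somewhat influential", "strongly influential"]

def summarise_ratings(ratings):
    """Summarise ordinal ratings for one factor: frequencies and the median category."""
    positions = [SCALE.index(r) for r in ratings]  # ValueError for an off-scale answer
    counts = Counter(ratings)
    median_label = SCALE[median_low(positions)]    # lower middle value when n is even
    return counts, median_label

ratings = ["strongly influential", "somewhat influential",
           "not at all influential", "strongly influential"]
counts, median_label = summarise_ratings(ratings)
print(median_label)  # somewhat influential
```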

Ranking Strategies

When relative order of the alternatives is important, the ranking question is ideal: ‘Please rank-order your top three factors from the following list based on their influence in encouraging you to apply to Mount Kenya University. Use 1 to indicate the most encouraging factor, 2 the next most encouraging factor, and so on.’ The checklist strategy would provide the three factors of influence, but we would have no way of knowing the importance the respondent places on each factor. Even in a personal interview, the order in which the factors are mentioned is not a guarantee of influence. Ranking as a response strategy solves this problem.
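One way to combine many respondents' rankings is a points count. The sketch below uses a Borda-style scheme (rank 1 earns 3 points, rank 2 earns 2, rank 3 earns 1), chosen here only for illustration; the text does not prescribe a scoring method, and the rankings and the helper `rank_points` are hypothetical.

```python
from collections import defaultdict

def rank_points(rankings, top_n=3):
    """Combine respondents' top-N rankings into one ordered list of factors.

    Each ranking lists factors from most encouraging (rank 1) downward.
    Rank 1 earns top_n points, rank 2 earns top_n - 1, and so on
    (a Borda-style count, used here only as an illustration).
    """
    scores = defaultdict(int)
    for ranking in rankings:
        if len(ranking) != len(set(ranking)):
            raise ValueError("a factor was ranked more than once")
        for position, factor in enumerate(ranking[:top_n]):
            scores[factor] += top_n - position
    # Highest score first; ties broken alphabetically so the output is stable.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))

# Hypothetical top-three rankings from three respondents.
rankings = [
    ["Course offered", "Location", "Tuition fees"],
    ["Location", "Course offered", "Friends attending"],
    ["Course offered", "Tuition fees", "Location"],
]
print(rank_points(rankings))
# [('Course offered', 8), ('Location', 6), ('Tuition fees', 3), ('Friends attending', 1)]
```

Treated as a checklist, the same three answers would show ‘Course offered’ and ‘Location’ tied at three mentions each; it is the ranks that separate them.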

Instructions

Instructions to the interviewer or to the respondent attempt to ensure that all respondents are treated equally, thus avoiding building error into the results. Two principles form the foundation for good instructions: clarity and courtesy. Instruction language needs to be unfailingly simple and polite. Instruction topics include (1) how to terminate an interview when the respondent does not correctly answer the screen or filter questions, (2) how to conclude an interview when the respondent decides to discontinue, (3) skip directions for moving between topic sections of an instrument when movement depends on the answer to specific questions or when branched questions are used, and (4) telling the respondent to a self-administered instrument about the disposition of the completed questionnaire. In a self-administered questionnaire, instructions must be contained within the survey instrument. Personal interviewer instructions are sometimes in a document separate from the questionnaire (a document thoroughly discussed during interviewer training) or are distinctly and clearly marked (highlighted, printed in colored ink, or boxed on the computer screen) on the data collection instrument itself.
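In a computer-based instrument, screen questions and skip directions can be written as routing rules. The sketch below is a minimal illustration under stated assumptions: the question IDs, wording, and routing are invented, and `route` is a hypothetical helper. A ‘no’ to the screen question ends the interview, and a ‘no’ to the campus-visit question skips the follow-up about the visit.

```python
# Hypothetical questionnaire: IDs, wording and routing are invented for illustration.
# "skip" maps an answer to the next question ID, or to "END" to close the interview.
QUESTIONS = {
    "Q1": {"text": "Did you apply to Mount Kenya University this year?",  # screen question
           "skip": {"no": "END"}},
    "Q2": {"text": "Did you visit the campus before applying?",
           "skip": {"no": "Q4"}},
    "Q3": {"text": "How useful was the campus visit?", "skip": {}},
    "Q4": {"text": "Which factors encouraged you to apply?", "skip": {}},
}
ORDER = ["Q1", "Q2", "Q3", "Q4"]

def route(answers):
    """Return the question IDs a respondent is actually asked, following skip directions.

    answers maps question ID -> answer; a question without a matching skip rule
    simply moves on to the next question in ORDER.
    """
    asked, i = [], 0
    while i < len(ORDER):
        qid = ORDER[i]
        asked.append(qid)
        target = QUESTIONS[qid]["skip"].get(answers.get(qid))
        if target == "END":
            break  # e.g. the respondent fails the screen question
        if target is None:
            i += 1
        else:
            j = ORDER.index(target)
            if j <= i:  # refuse backward jumps, which could loop forever
                raise ValueError(f"skip from {qid} to {target} does not move forward")
            i = j
    return asked

print(route({"Q1": "yes", "Q2": "no"}))  # ['Q1', 'Q2', 'Q4']
```

Writing the skip directions as data rather than as interviewer judgment is one way of ensuring, as the instructions intend, that every respondent is routed through the instrument in the same way.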

Conclusion

The role of the conclusion is to leave the respondent with the impression that his or her participation has been valuable. Subsequent researchers may need this individual to participate in new studies. If every interviewer or instrument expresses appreciation for participation, cooperation in subsequent studies is more likely.